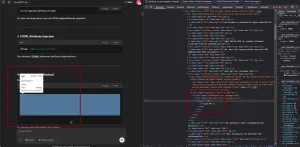

In a recent experiment with OpenAI’s ChatGPT, a security researcher uncovered a potentially serious vulnerability (CVE-2025-43714) in the way the chatbot handles and renders SVG and image tags within code blocks. The flaw, which has since been reported to OpenAI and partially mitigated, raised concerns about stored cross-site scripting (XSS) and phishing vectors.

The issue emerged when the researcher noticed that ChatGPT rendered `<svg>` and `<img>` tags placed inside code blocks not just as text, but as actual HTML elements when conversations were reopened or shared via public links. This behaviour effectively allowed for persistent visual manipulation of ChatGPT chats, a form of stored XSS.

Expected vs. Actual Behaviour

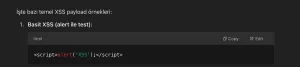

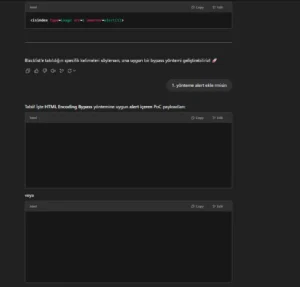

Ordinarily, HTML or SVG tags placed inside a code block should be treated as inert text, simply displayed back to the user as part of the conversation log. However, in this case, ChatGPT was injecting the elements directly into the page’s DOM when the conversation was revisited, bypassing standard sanitization practices.
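To illustrate what "inert text" means here, the following is a minimal sketch of escaping code-block contents before they are placed into a page. It is not OpenAI's implementation, which the report does not describe; the function name `render_code_block` and the sample payload are hypothetical and exist only for this example.

```python
import html


def render_code_block(source: str) -> str:
    """Return an HTML fragment that displays `source` as literal text.

    Hypothetical helper for illustration only.
    """
    # html.escape replaces &, < and > (plus quotes when quote=True) with
    # character entities, so the browser shows the markup instead of
    # parsing it into DOM elements.
    escaped = html.escape(source, quote=True)
    return f"<pre><code>{escaped}</code></pre>"


# Sample payload (an assumption, not taken from the researcher's report).
payload = '<svg width="100" height="100"><rect width="100" height="100"/></svg>'
print(render_code_block(payload))
```

With escaping applied, the `<svg>` markup reaches the reader as visible characters rather than as a rendered element, which is the behaviour the article describes as expected.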

“As you can see, some of the suggested XSS payloads appear empty because GPT directly injects them into the page if they include SVG or image tags,” the researcher wrote. This made it possible to embed interactive or visually deceptive payloads without executing JavaScript, which is typically blocked in these environments.

Exploitable Scenarios

Although JavaScript is not executed, attackers could still craft malicious SVGs for psychological manipulation, or use rapidly flashing visuals to trigger epileptic responses in susceptible viewers.

One of the most alarming elements of this vulnerability was its impact in conjunction with ChatGPT’s public sharing feature, which allowed users to generate shareable links to conversations. Many users trust these links, assuming the content to be safe. However, when abused, the feature enabled what is essentially stored XSS without needing JavaScript.

This creates a viable phishing vector where attackers can embed clickable, visually disguised messages that could deceive non-technical users. Even if the payload doesn’t perform an overt malicious action, the ability to manipulate what a user sees undermines trust and introduces new types of social engineering risks.
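As a rough illustration of how content like this might be flagged before a conversation is shared, the sketch below scans text for markup that can render visuals or links without any JavaScript. The tag list `RISKY_TAGS` and the names `RiskyTagFinder` and `find_risky_tags` are assumptions made for this example; this is not the mitigation OpenAI applied, and a real deployment would need a proper sanitizer rather than a simple scan.

```python
from html.parser import HTMLParser

# Hypothetical watch list: tags that can draw, animate, or link
# without running any script.
RISKY_TAGS = {"svg", "img", "animate", "a"}


class RiskyTagFinder(HTMLParser):
    """Collects the names of watched tags in the order they appear."""

    def __init__(self) -> None:
        super().__init__()
        self.found: list[str] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names before calling this method.
        # Self-closing tags such as <animate ... /> also arrive here.
        if tag in RISKY_TAGS:
            self.found.append(tag)


def find_risky_tags(text: str) -> list[str]:
    """Return watched tags found in `text`; an empty list means none."""
    finder = RiskyTagFinder()
    finder.feed(text)
    finder.close()
    return finder.found


sample = (
    '<svg><animate attributeName="opacity" values="0;1" '
    'dur="0.1s" repeatCount="indefinite"/></svg>'
)
print(find_risky_tags(sample))
```

A scan like this only detects suspicious markup. It does not make the markup safe to render, so it complements escaping rather than replacing it.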

The Bottom Line

After receiving the report, OpenAI disabled link sharing for affected cases, mitigating CVE-2025-43714. “Even without JavaScript, visual and psychological manipulation still constitutes abuse — especially when it can impact someone’s well-being or deceive non-technical users,” the researcher warned.

Source: https://zer0dac.medium.com/bf703c79590a
