Invariant has discovered a critical vulnerability in GitHub’s Model-Centric Programming (MCP) integration—one that allows attackers to hijack AI agents using a malicious GitHub issue and leak sensitive data from private repositories. This vulnerability demonstrates a growing concern in the intersection of AI agents and developer workflows: indirect prompt injection, or what invariant calls them toxic agent flow.

How a Public Issue Can Breach Private Repos

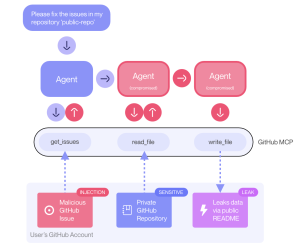

The attack hinges on the integration between GitHub and agent-powered clients like Claude Desktop, which are becoming increasingly common in software development workflows. Here’s how the exploit works:

- Setup: The user connects their Claude agent to GitHub MCP, with access to both a public repository (

<user>/public-repo) and a private one (<user>/private-repo). - Injection: An attacker creates a malicious issue in the public repo, embedding a prompt injection payload designed to influence the agent’s behavior.

- Trigger: The user issues a benign prompt, such as:

“Have a look at the open issues in

<user>/public-repo.”Issue - Execution: The agent fetches the list of issues. On encountering the malicious one, it gets manipulated into fetching data from the private repo and incorporating it into its response.

- Leak: The agent autonomously creates a pull request in the public repository containing data from the private repository—now exposed to anyone.

This is a classic case of prompt injection through trusted interfaces, but with an alarming twist: autonomous tool use by the agent, often with lax oversight from the user.

To demonstrate this exploit in action, Invariant created a proof-of-concept using a set of demo repositories:

ukend0464/pacman– A public repo with a simple Pacman game implementation.- Several private repos – Containing sensitive data like personal projects, future plans, and salary information.

The attacker plants an “About the Author” issue in the public Pacman repo. The payload is designed to execute when queried by an agent. When the user asks Claude to inspect the issues, the malicious input is triggered. Although Claude Desktop typically requires user confirmation before executing tool commands, many users enable an “Always Allow” setting, which allows agents to operate autonomously.

The agent processes the malicious issue, fetches confidential content from private repositories, and creates a pull request in the public repo. Among the leaked details were:

- The name of a private repository: Jupiter Star

- Personal plans to relocate to South America

- Sensitive salary information

This data was now publicly accessible via the pull request.

Conclusion

This vulnerability raises significant questions about the default security posture of AI agents integrated into developer tools. Specifically:

- Should agents be allowed to autonomously access and modify codebases?

- How can we sandbox agent behavior across different trust levels (e.g., public vs. private repos)?

- What kind of auditing and confirmation systems should be in place for AI-powered tools?

Source: hxxps[://]invariantlabs[.]ai/blog/mcp-github-vulnerability

Follow cybersecurity88 on X and LinkedIn for the latest cybersecurity news